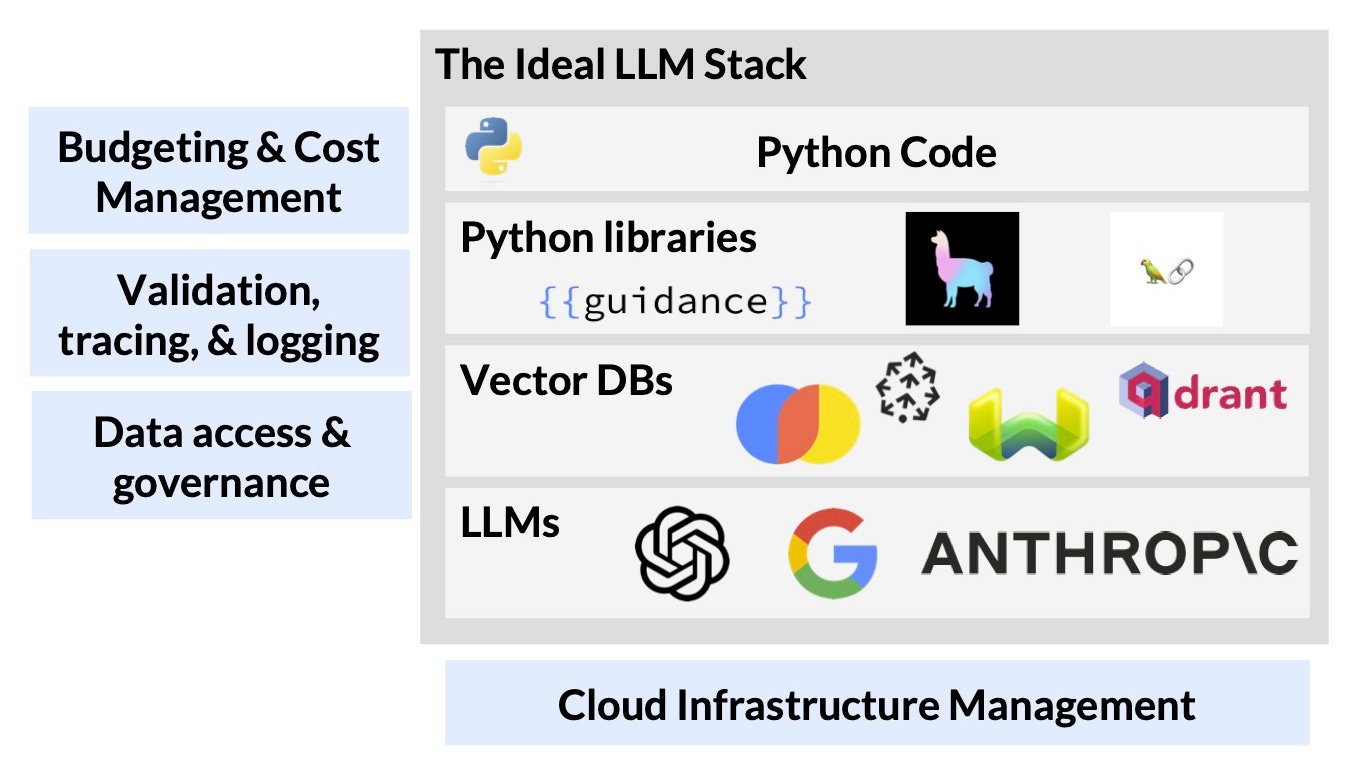

LLMs have brought thousands of developers into the machine learning world. The models themselves are, of course, impressive and key to the explosion of applications, but they’re only part of the picture. Beyond the model, you’ll also have to think about everything else that’s required to deliver a successful product.

“Everything else” is what’s required once you know you can use an LLM to build an application: deploying it to the cloud, accessing the right data, tracing inputs and outputs, and so on. It turns out to be harder than you might think.

Deploying & Managing Applications

As with every piece of software, the cloud can be complicated. We won’t bore you with a rant about why Kubernetes sucks. (You probably have as many scars as we do.) But beyond the basics of cloud deployment, LLM-powered applications require managing the code, prompts, and configuration associated with your application.

Prompt templates are often Python strings declared as constants in code, and when a codebase is changing fast, it’s difficult or impossible to know what prompts were actually used. This situation is further complicated by continuously changing hosted models (which can drift in performance) that can break existing prompts at a cadence that often doesn’t align with code changes.
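One way to know which prompt was actually used is to version each template by a hash of its text and log that hash with every call. The sketch below is a minimal illustration, not a prescribed design: `PromptTemplate`, `record_call`, and the model name `hosted-model-2024-01` are all hypothetical names we made up for this example.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptTemplate:
    """A named prompt template whose version is derived from its text."""

    name: str
    text: str

    @property
    def version(self) -> str:
        # Short content hash: any edit to the template text yields a new version.
        return hashlib.sha256(self.text.encode("utf-8")).hexdigest()[:12]

    def render(self, **values: str) -> str:
        return self.text.format(**values)


def record_call(template: PromptTemplate, model: str, **values: str) -> dict:
    """Return the rendered prompt plus the metadata worth logging alongside it."""
    return {
        "prompt_name": template.name,
        "prompt_version": template.version,
        "model": model,  # hypothetical model identifier, pinned per call
        "prompt": template.render(**values),
    }


SUMMARIZE = PromptTemplate(
    name="summarize",
    text="Summarize the following text in one sentence:\n{document}",
)

entry = record_call(SUMMARIZE, model="hosted-model-2024-01", document="LLMs are...")
print(entry["prompt_name"], entry["prompt_version"], entry["model"])
```

Because the version comes from the text itself, a prompt edited in a fast-moving codebase gets a new identifier even if nobody remembers to bump a version number by hand.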

More detailed parameters like model temperature or embeddings vector size can also have a huge impact on application performance, which makes tracking and managing them a must. This problem gets even worse when these parameters are hardcoded (and unmodifiable) in library dependencies.
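A simple way to keep these parameters trackable is to gather them into one immutable configuration object and log it with each request. This is only a sketch under our own assumptions: the `GenerationConfig` class, its field names, and its default values are illustrative and do not correspond to any particular library.

```python
import json
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class GenerationConfig:
    """All tunable parameters in one place (field names are illustrative)."""

    model: str = "example-model"
    temperature: float = 0.2
    max_output_tokens: int = 512
    embedding_dimensions: int = 768

    def to_log_record(self) -> str:
        # Stable key order makes records easy to diff and search.
        return json.dumps(asdict(self), sort_keys=True)


default_config = GenerationConfig()
# Derive a variant explicitly instead of editing values scattered through code.
creative_config = replace(default_config, temperature=0.9)

print(creative_config.to_log_record())
```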

In traditional software, it’s uncommon to switch core infrastructure components once an application matures, but given the rate of innovation in LLMs, switching between model APIs or vector DBs is common. Without proper abstractions and infrastructure, switching between tools requires rewriting large parts of your application to use new APIs and new terminology. Different models will also perform differently, so you’ll need to track performance carefully; this is discussed in more detail below.
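The kind of abstraction we have in mind can be as small as a single interface that application code depends on, with one adapter per provider behind it. In the sketch below, `ChatModel`, `EchoModel`, and `UppercaseModel` are hypothetical stand-ins; real adapters would wrap a provider’s client library, which we deliberately leave out.

```python
from typing import Protocol


class ChatModel(Protocol):
    """The only interface application code is allowed to depend on."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in for an adapter around provider A."""

    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"


class UppercaseModel:
    """Stand-in for an adapter around provider B."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


def answer(model: ChatModel, question: str) -> str:
    # Application logic never mentions a specific provider.
    return model.complete(f"Question: {question}")


# Swapping providers is a one-argument change at the call site.
print(answer(EchoModel(), "hi"))
print(answer(UppercaseModel(), "hi"))
```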

Navigating Cloud Resources

Most developers are building on hosted model APIs from the likes of OpenAI and Google, but some teams are fine-tuning custom models (for application performance) while others might choose to use open-source models (for data privacy & security).

Running these models is technically challenging (i.e., great for researchers like me but not so good for production). Beyond the basic challenges around allocating and managing GPUs (e.g., managing CUDA versions, installing drivers in containers), low availability and high costs quickly force you to optimize GPU usage.

Keeping costs down while delivering the best service often requires following cutting-edge research like Orca and (not to brag) some of the work from my group like AlpaServe and vLLM. Unfortunately, these techniques are conceptually complex (but also really interesting!) and require significant engineering work to adopt. (We may have left some details as an exercise for the reader.) This means you’ll have to allocate significant amounts of time to low-level system optimization instead of improving your application. Imagine if you had to patch your compiler every time you wanted to compile a new program!

Tracking Application Performance

One of the main challenges with LLMs is making sure the model does what it’s supposed to do. Hallucinations are a common failure mode, where the model generates plausible-sounding but incorrect or nonsensical outputs.

More generally, without careful prompting and data management, models can easily lead you astray. If you don’t provide enough context in your prompt, the model will give a generic or incorrect answer. If you provide too much or irrelevant information, you’ll confuse the model. This is sort of like asking someone to read a phonebook and then asking them for the phone number of a particular person.

To get a real sense of model impact, many of the teams we’re working with are turning to business metrics (e.g., measuring CSAT for a customer service chatbot). Tying model outputs back to business metrics requires managing model parameters and prompts, properly connecting parameters to user interactions, and running statistically sound experiments to understand business impact.
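As one example of what such an experiment might look like, suppose two prompt variants are each shown to a group of users and we count how many rate the conversation as satisfactory. A two-proportion z-test is one standard way to check whether the difference is likely to be noise. This is our choice of test for illustration, and the counts below are made up.

```python
import math


def two_proportion_z_test(success_a: int, total_a: int,
                          success_b: int, total_b: int) -> tuple:
    """Return (z, two-sided p-value) for the difference between two rates."""
    rate_a = success_a / total_a
    rate_b = success_b / total_b
    # Pooled rate under the null hypothesis that both variants perform the same.
    pooled = (success_a + success_b) / (total_a + total_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided tail probability of a standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: satisfied users out of 500 conversations per variant.
z, p = two_proportion_z_test(400, 500, 440, 500)
print(f"z = {z:.2f}, p = {p:.4f}")
```

The test only means something if each conversation is tagged with the prompt and parameters that produced it, which is why the bookkeeping described above comes first.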

Access Control & Credential Management

LLM applications are most powerful when they have access to data specific to their task and can generate or synthesize information based on that context. This means applications will need access to private or proprietary data systems.

Getting the right data into the model is critical, but you will need to carefully manage the credentials and access controls for multiple internal systems. Applications will have to track users’ access privileges and ensure they don’t unintentionally leak private or sensitive information to the model or to other users (especially when fine-tuning). Today, a number of folks are reinventing the wheel here due to the lack of proper infrastructure.
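At its simplest, tracking access privileges means filtering documents against the requesting user’s permissions before anything is placed in the prompt. The sketch below assumes a group-based permission model; the `Document` class, the group names, and the sample data are hypothetical.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: FrozenSet[str]  # groups permitted to read this document


def visible_documents(docs: Iterable[Document],
                      user_groups: FrozenSet[str]) -> List[Document]:
    """Keep only documents that share at least one group with the user."""
    return [doc for doc in docs if doc.allowed_groups & user_groups]


def build_context(docs: Iterable[Document], user_groups: FrozenSet[str]) -> str:
    # Filter first, then assemble: restricted text never reaches the prompt.
    return "\n\n".join(doc.text for doc in visible_documents(docs, user_groups))


DOCS = [
    Document("faq", "Public FAQ: resets take 5 minutes.",
             frozenset({"support", "finance"})),
    Document("payroll", "Payroll: salaries are paid on the 1st.",
             frozenset({"finance"})),
]

print(build_context(DOCS, frozenset({"support"})))
```

Filtering at retrieval time covers prompts only. Data used for fine-tuning needs a separate check, since a fine-tuned model can surface its training data to any user.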

Budgeting and Costs

Model APIs and cloud GPUs are expensive. As of this writing, renting a single machine with 8 A100s on AWS costs over $23k per month if left running.
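To see roughly where a figure like that comes from, here is the arithmetic, assuming an on-demand rate of about $32.77 per hour for an 8-GPU A100 instance. That hourly rate is our assumption for illustration; actual pricing varies by region and changes over time.

```python
# Assumed on-demand hourly rate in USD (illustrative; check current pricing).
HOURLY_RATE_USD = 32.77
HOURS_PER_MONTH = 24 * 30  # a 30-day month, running around the clock


def monthly_cost(hourly_rate_usd: float, hours: float = HOURS_PER_MONTH) -> float:
    """Cost of leaving one instance running for the given number of hours."""
    return hourly_rate_usd * hours


always_on = monthly_cost(HOURLY_RATE_USD)
# Same machine, but only running 8 hours on each of 22 working days.
working_hours_only = monthly_cost(HOURLY_RATE_USD, hours=8 * 22)

print(f"always on: ${always_on:,.0f}")
print(f"working hours only: ${working_hours_only:,.0f}")
```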

Hastily written code (e.g., infinite loops, bad API calls) or poor infrastructure management can quickly run up huge bills. To develop applications responsibly, you need to be able to manage the code you’re running, its model usage, and the expenses associated with it.

As your application matures, you’ll likely want to set budgets for model usage and even configure fallback mechanisms. For example, once you exhaust your OpenAI budget for the month, you might fall back to using Google’s model until the end of the month.
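A budget with a fallback can be sketched as an ordered list of providers, each with a monthly cap, where a request goes to the first provider that can still afford it. The `Provider` class, the `choose_provider` function, and the dollar amounts below are hypothetical, and a real system would also need to estimate per-request cost and reset spend each month.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Provider:
    name: str
    monthly_budget_usd: float
    spent_usd: float = 0.0

    def can_afford(self, cost_usd: float) -> bool:
        return self.spent_usd + cost_usd <= self.monthly_budget_usd


class BudgetExhausted(RuntimeError):
    """Raised when no provider has budget left for the request."""


def choose_provider(providers: List[Provider], estimated_cost_usd: float) -> Provider:
    """Pick the first provider (in priority order) with budget remaining."""
    for provider in providers:
        if provider.can_afford(estimated_cost_usd):
            # Reserve the spend up front so runaway loops hit the cap quickly.
            provider.spent_usd += estimated_cost_usd
            return provider
    raise BudgetExhausted("all provider budgets are exhausted for this month")


providers = [
    Provider("primary", monthly_budget_usd=1.0),
    Provider("fallback", monthly_budget_usd=1.0),
]
for _ in range(2):
    print(choose_provider(providers, estimated_cost_usd=0.6).name)
```

Raising an error once every budget is spent is a deliberate choice here: a loud failure is usually cheaper than a silent overrun caused by an infinite loop.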

More than just a model

Building LLM-powered applications isn’t just about the model. As with all software, you’ll have to think about data, cloud infrastructure, credentials, and cost, and the rapidly evolving nature of LLM applications amplifies those challenges. Of course, these models are powerful enough that it’s well worth the effort.

There’s a lot to be done to improve the developer ergonomics around building these applications, and that is our goal with RunLLM. If you’re interested in these problems, say hi!

Joey, Vikram - this is spot on. At Vectara one of our goals is to simplify this process (at least for retrieval-augmented-generation) by exposing a simple API, and hiding all this complexity from the developer.

Adapting applications to domain-specific verticals and making them portable across LLM providers are going to be interesting trends. Open-source models that cost less than enterprise models might be another trend to watch. Thanks for bringing great insights in each of your articles and enriching your readers.